Arrow detection

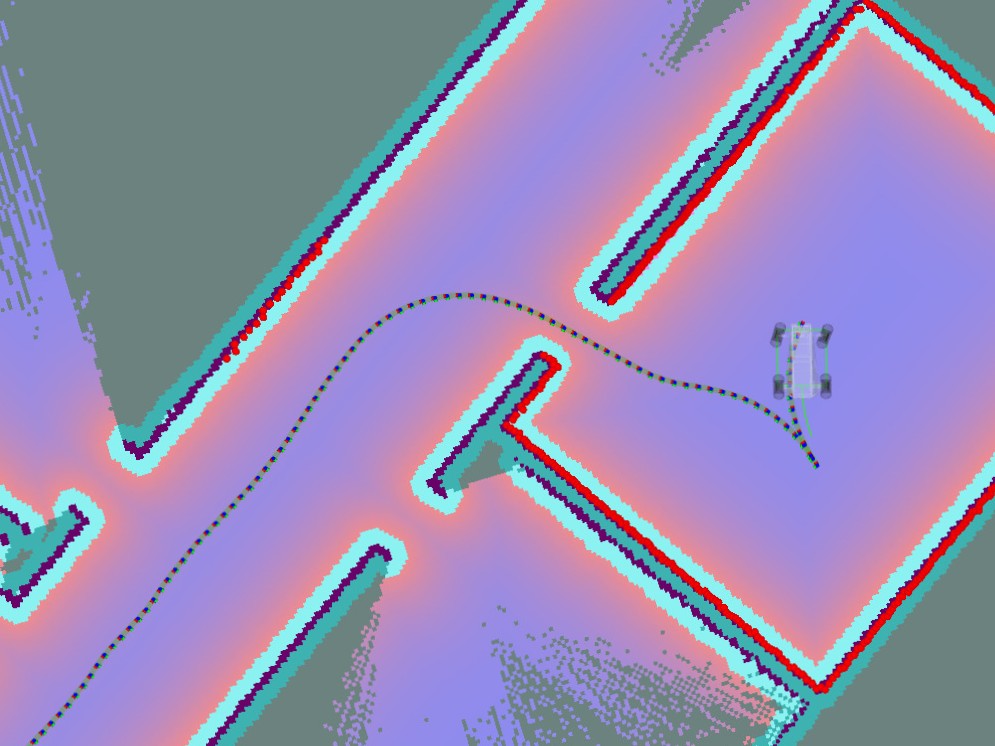

To handle ambiguous path-finding situations, the robot uses YOLOv11-based arrow detection to interpret directional cues in the data from its Intel® RealSense™ D435 depth camera. This feature lets the robot detect and follow arrow signs in real time and adjust its route dynamically when prompted.

The system detects our designated magenta arrow by its geometric shape and specific color. We chose this color and design after experimenting with several arrow versions. Initially, we used a bright red arrow, but this produced numerous false positives because the camera often misinterpreted red objects in the environment as arrows. Switching to a magenta arrow significantly reduced misdetections, since magenta has higher visual contrast in most typical surroundings.

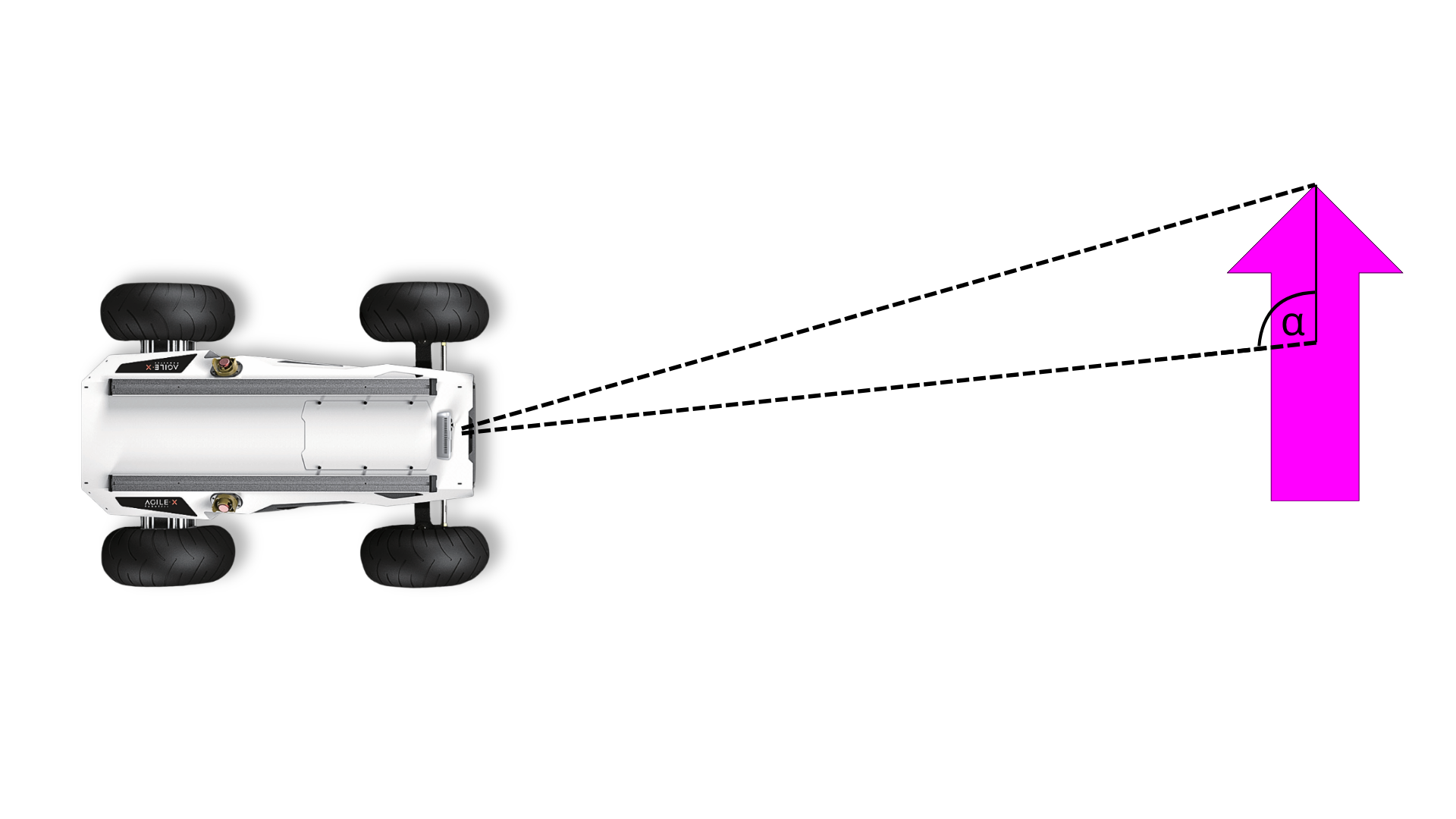

To measure the arrow's orientation, the algorithm detects two distinct parts of the arrow and calculates the angle of the line connecting the midpoints of the two detections. This method gives a reliable estimate of the pointing direction without relying on computationally expensive oriented bounding box (OBB) machine learning models.
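
The midpoint-based angle calculation can be sketched as follows. This is a minimal illustration and not the project's actual code: it assumes the two detected parts are the arrow's tail and head, that each detection is an axis-aligned box given as `(x1, y1, x2, y2)` in pixels, and that angles are measured counterclockwise with 0° pointing right. The names `box_center` and `arrow_angle_deg` are hypothetical.

```python
import math


def box_center(box):
    """Return the (x, y) midpoint of an axis-aligned box (x1, y1, x2, y2)."""
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2.0, (y1 + y2) / 2.0)


def arrow_angle_deg(tail_box, head_box):
    """Angle of the tail-to-head vector in degrees, in the range [0, 360).

    Assumed convention: 0 = pointing right, 90 = pointing up in the image.
    """
    tx, ty = box_center(tail_box)
    hx, hy = box_center(head_box)
    dx = hx - tx
    dy = ty - hy  # image y grows downward, so flip it to make "up" positive
    return math.degrees(math.atan2(dy, dx)) % 360.0
```

Because only two box centers and one `atan2` call are involved, the cost per frame is negligible compared with the detection itself, which fits the stated goal of avoiding an OBB model.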

The YOLOv11 model was trained on a dataset of 120 labeled images. We initially used Roboflow for annotation and labeling but later switched to Label Studio for better control and flexibility. With this dataset and our tailored approach, we achieved a lightweight and accurate arrow detection system optimized for real-time robotic navigation.

Integrating the detector into our ROS-based environment required a dedicated ROS node, which runs the arrow detection and publishes its results to the ROS network. This node provides three key pieces of information: the arrow's orientation angle, its distance from the robot, and its horizontal position within the camera's field of view. Other ROS nodes consume these values to inform the robot's path-planning logic.
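
As a rough sketch of how the three published values might be derived from one detection, the following pure-Python example computes a distance and a horizontal position from a bounding box and a depth frame. Everything here is an assumption for illustration: the names `ArrowObservation`, `median_depth_m`, `horizontal_offset`, and `summarize_arrow` are hypothetical, the depth frame is taken to be rows of raw integer depth values where 0 means "no reading", the depth scale of 0.001 m per unit is assumed, and the horizontal position is expressed as a normalized offset from the image center. The actual ROS message type and topic names are not specified here and are left out.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class ArrowObservation:
    angle_deg: float          # orientation of the arrow
    distance_m: float         # distance from the camera in meters
    horizontal_offset: float  # -1 = left edge, 0 = center, +1 = right edge


def median_depth_m(depth_rows, box, depth_scale=0.001):
    """Median depth inside the box in meters, ignoring zero (invalid) pixels."""
    x1, y1, x2, y2 = (int(v) for v in box)
    values = [d for row in depth_rows[y1:y2] for d in row[x1:x2] if d > 0]
    if not values:
        return float("nan")  # no valid depth reading inside the box
    return median(values) * depth_scale


def horizontal_offset(box, image_width):
    """Normalized horizontal position of the box center in the image."""
    cx = (box[0] + box[2]) / 2.0
    half_width = image_width / 2.0
    return (cx - half_width) / half_width


def summarize_arrow(angle_deg, box, depth_rows, image_width):
    """Bundle the three values a detection node could publish."""
    return ArrowObservation(
        angle_deg=angle_deg,
        distance_m=median_depth_m(depth_rows, box),
        horizontal_offset=horizontal_offset(box, image_width),
    )
```

Using the median rather than a single center pixel is one way to tolerate holes in the depth image; a downstream path-planning node could then treat a `nan` distance as "arrow seen, range unknown".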